Five cross-validation strategies can make time series forecasts more trustworthy: they give a realistic picture of performance, expose data leakage, and prepare models for production. This guide covers:

- Implementing walk-forward validation for accurate production simulation.

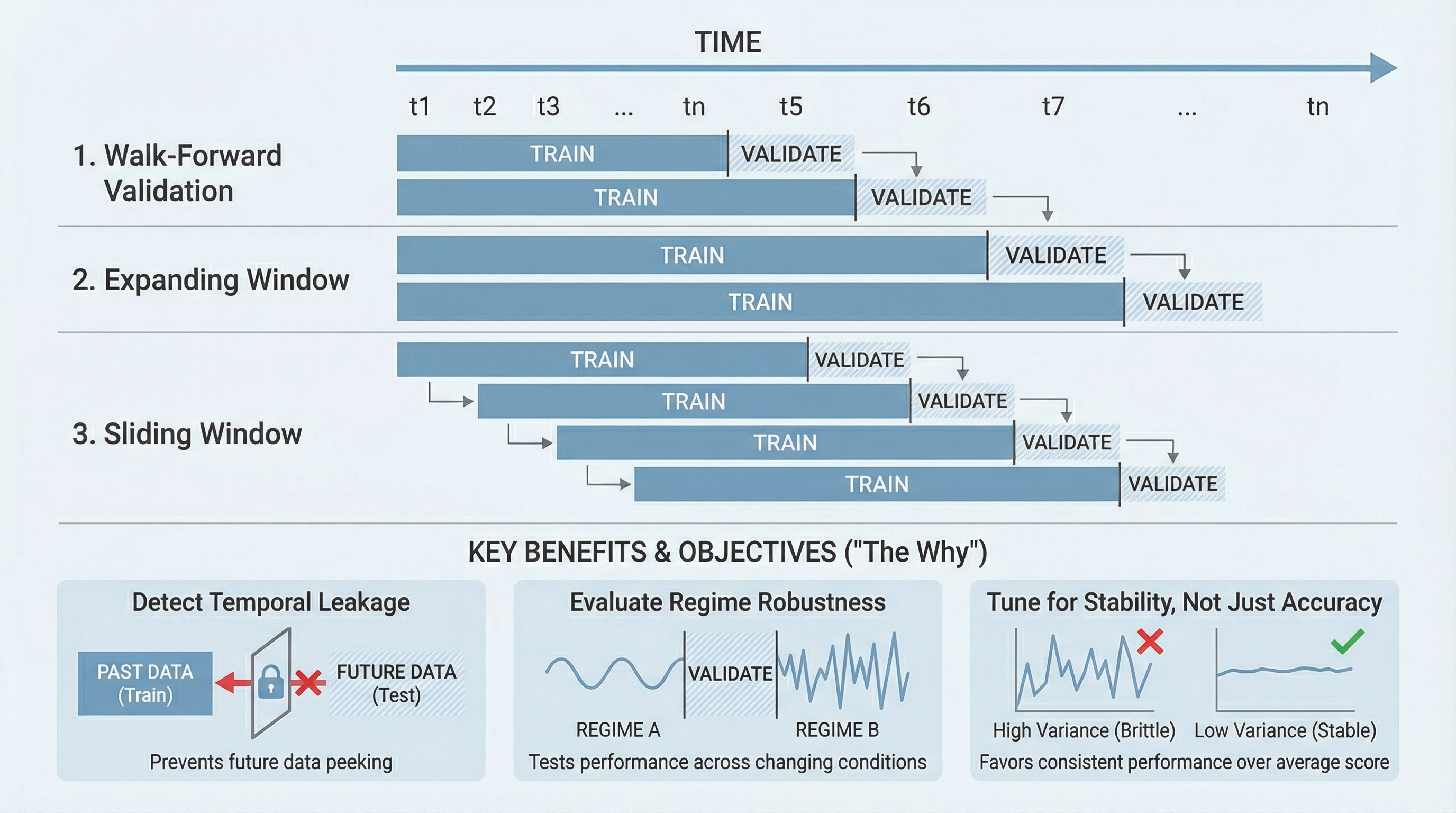

- Leveraging expanding versus sliding windows to optimize memory depth.

- Identifying temporal leakage, verifying robustness across market regimes, and fine-tuning for unwavering stability over peak accuracy.

The sections below walk through each of these techniques.

5 Crucial Ways to Employ Cross-Validation for Enhanced Time Series Models

Image by Editor

Transforming Time Series Evaluation with Cross-Validation

Time series models are fragile in a specific way: a model that excels in backtesting often falters on new data. The cause is frequently the validation method. Random splits, standard k-fold cross-validation, and naive holdout sets break the temporal dependencies that define time series data. Cross-validation remains a powerful tool, but it must be adapted to respect time order.

Adapted this way, cross-validation helps diagnose data leakage, improve generalization, and show how a model behaves as conditions change. Used well, it does more than produce an accuracy score: it builds confidence that the model holds up under real-world constraints.

Walk-Forward Validation: The Ultimate Production Rehearsal

Walk-forward validation is the closest simulation of how a time series model behaves in deployment. Instead of a static split, the model is retrained repeatedly as time moves forward. Every split respects causality: the model trains only on past data and is validated on the observations that immediately follow. This matters because time series models typically fail not for lack of historical signal, but because future data shifts in ways the past did not anticipate.
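The procedure described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function name `walk_forward_splits`, the toy series, and the naive "predict the last seen value" forecaster are all assumptions made for the example.

```python
from typing import Iterator, List, Tuple


def walk_forward_splits(
    n_samples: int, initial_train: int, horizon: int
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) pairs that move forward in time.

    Each fold trains on everything before the cut-off and tests on the
    next `horizon` observations, so every train index precedes every
    test index.
    """
    cutoff = initial_train
    while cutoff + horizon <= n_samples:
        train_idx = list(range(0, cutoff))
        test_idx = list(range(cutoff, cutoff + horizon))
        yield train_idx, test_idx
        cutoff += horizon  # the retraining point advances by one horizon


# Hypothetical toy series; a real run would use your own data and model.
series = [10.0, 11.0, 12.0, 13.0, 15.0, 18.0, 22.0, 27.0, 33.0, 40.0]

fold_mae = []
for train_idx, test_idx in walk_forward_splits(len(series), initial_train=4, horizon=2):
    # Stand-in for retraining: the naive forecaster is "refit" on each fold
    # by taking the most recent training value as its prediction.
    last_seen = series[train_idx[-1]]
    errors = [abs(series[i] - last_seen) for i in test_idx]
    fold_mae.append(sum(errors) / len(errors))

print(fold_mae)  # one mean absolute error per fold, in time order
```

Keeping one score per fold, in time order, is what makes the later analysis possible: the list shows whether errors grow as the series moves away from the conditions the model first saw.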

This approach exposes how sensitive your model is to gradual changes in the data. A model that performs well in early folds but degrades in later ones is showing regime dependence, an insight a single holdout cannot provide. Walk-forward validation also clarifies how retraining frequency affects performance: some models gain substantially from frequent updates, while others are barely affected.

The technique also imposes practical discipline by forcing you to codify retraining logic early. Feature generation, scaling, and lag construction must all work incrementally. Anything that breaks as the window moves forward would also break in production, so validation becomes a way to debug the whole pipeline rather than just assess the estimator.

Expanding vs. Sliding Windows: Strategic Memory Depth Testing

An often implicit assumption in time series modeling is how much history a model should retain. Expanding windows include all preceding data and grow with each fold. Sliding windows keep a fixed-length history, discarding the oldest observations as new ones arrive. Cross-validation lets you test this assumption empirically instead of guessing.
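To make the difference concrete, here is a small sketch of a split generator that produces either kind of window. The function name `window_splits` and its parameters are hypothetical, chosen for this example only.

```python
from typing import Iterator, List, Optional, Tuple


def window_splits(
    n_samples: int,
    initial_train: int,
    horizon: int,
    max_train_size: Optional[int] = None,
) -> Iterator[Tuple[List[int], List[int]]]:
    """Expanding window when max_train_size is None, sliding window otherwise."""
    cutoff = initial_train
    while cutoff + horizon <= n_samples:
        # Expanding: always start at 0. Sliding: keep only the most recent
        # max_train_size observations before the cut-off.
        start = 0 if max_train_size is None else max(0, cutoff - max_train_size)
        yield list(range(start, cutoff)), list(range(cutoff, cutoff + horizon))
        cutoff += horizon


expanding = list(window_splits(12, initial_train=4, horizon=2))
sliding = list(window_splits(12, initial_train=4, horizon=2, max_train_size=4))

for (exp_train, test), (sl_train, _) in zip(expanding, sliding):
    print(f"test={test}  expanding_len={len(exp_train)}  sliding_train={sl_train}")
```

Both variants evaluate on identical test indices, so any difference in fold scores comes from the amount of history alone. If you use scikit-learn, its `TimeSeriesSplit` exposes a `max_train_size` argument that serves a similar purpose.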

Expanding windows tend to be more stable and work well when long-term patterns dominate and structural shifts are rare. Sliding windows are more responsive and adapt quickly when recent trends are more predictive than distant history. Neither is universally better; which one wins often becomes clear only when both are compared across multiple folds.

Evaluating both strategies shows where your model sits on the bias-variance trade-off. If performance improves with shorter windows, older data may be adding noise. If longer windows consistently perform better, the older data carries persistent signal. The comparison also informs feature engineering, particularly lag depth and the window length of rolling statistics.

Unmasking Temporal Data Leakage with Cross-Validation

Temporal data leakage is a common pitfall that inflates performance metrics beyond what the model can actually deliver. It usually enters quietly through feature engineering, normalization routines, or target derivations that inadvertently use future information. Properly structured cross-validation is one of the best defenses against it.
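Normalization is an easy place to see how this happens. The sketch below uses a made-up trending series and compares scaling statistics computed over the full series (leaky) with statistics computed from the training window only (clean). The data and the `standardize` helper are assumptions for illustration.

```python
from statistics import mean, pstdev

# Hypothetical upward-trending series; the last 3 points are the test period.
series = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
train, test = series[:7], series[7:]


def standardize(values, mu, sigma):
    """Scale values with externally supplied statistics."""
    return [(v - mu) / sigma for v in values]


# Leaky: statistics over the full series already include the test period.
leaky_mu, leaky_sigma = mean(series), pstdev(series)

# Clean: statistics come from the training window only.
clean_mu, clean_sigma = mean(train), pstdev(train)

leaky_test = standardize(test, leaky_mu, leaky_sigma)
clean_test = standardize(test, clean_mu, clean_sigma)

print(f"leaky mean={leaky_mu:.2f}  clean mean={clean_mu:.2f}")
print("leaky scaled test:", [round(v, 2) for v in leaky_test])
print("clean scaled test:", [round(v, 2) for v in clean_test])
```

With the leaky statistics the test values look unremarkable after scaling, because the scaler has already seen them. With training-only statistics they sit well outside the range the model was fit on, which is what the model would actually face in production.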

Suspiciously uniform validation scores across folds are a red flag, because genuine time series performance fluctuates. Exceptional consistency can indicate that information from the evaluation period has entered the training set. Walk-forward splits with strict temporal boundaries make such leakage much harder to hide.

Cross-validation also helps localize the problem. A sharp drop in performance after fixing the split logic indicates that the model had been relying on information from the future. This feedback loop turns validation from a passive scoring exercise into an active diagnostic for pipeline integrity.

Assessing Model Robustness Across Regime Transitions

Time series data rarely stays within a single, static regime. Economies evolve, consumer behavior changes, sensors drift, and external events alter the underlying dynamics. A single train-test split may capture only one regime and so create unwarranted confidence. Cross-validation spreads evaluation across a wider span of time, which raises the chance of testing the model during a regime transition.

Examining performance fold by fold, rather than relying only on aggregate metrics, shows how well the model adapts. Some folds may show high accuracy while others show clear degradation. That pattern is more diagnostic than the average score: it reveals whether the model is robust or brittle.
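A fold-level report can be very simple. In the sketch below the fold labels and error values are invented, and the 1.5x threshold for flagging a fold is an arbitrary choice for illustration, not a recommended standard.

```python
from statistics import mean

# Hypothetical per-fold MAE values from a walk-forward run (lower is better).
fold_mae = {"2021-H1": 1.0, "2021-H2": 1.5, "2022-H1": 4.0, "2022-H2": 1.5}

average = mean(list(fold_mae.values()))
worst_fold = max(fold_mae, key=fold_mae.get)

# Flag folds whose error is far above the average (assumed threshold: 1.5x).
flagged = [name for name, score in fold_mae.items() if score > 1.5 * average]

print(f"average MAE: {average:.2f}")
print(f"worst fold:  {worst_fold} ({fold_mae[worst_fold]:.2f})")
print(f"flagged:     {flagged}")
```

The average alone hides the fact that one period behaved very differently from the rest. Flagged folds are the ones worth inspecting for a regime change in the underlying data.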

This perspective also guides model selection. A slightly less accurate model that degrades gracefully is often a more reliable choice than a high-scoring but brittle one. Cross-validation makes these trade-offs visible and turns evaluation into a stress test rather than a superficial comparison.

Optimizing Hyperparameters for Stability, Not Just Accuracy

Hyperparameter tuning for time series models is often handled just as it is for tabular data: optimize a chosen metric, pick the highest score, and move on. Cross-validation supports a more robust approach. Instead of simply finding the configuration with the best average performance, you can look for the configuration that behaves consistently over time.

Some hyperparameter settings produce volatile performance, with pronounced peaks and troughs across folds. Others deliver steady, predictable results. Fold-by-fold scores make the difference visible, so you can favor configurations with lower variance even when a more volatile alternative has a marginally better average.
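One way to act on this is to rank configurations by mean error plus a penalty on fold-to-fold spread. The sketch below is only one possible heuristic: the configuration names, the scores, and the `penalty` weight are all assumptions made for the example.

```python
from statistics import mean, pstdev

# Hypothetical per-fold errors (lower is better) for two configurations.
results = {
    "config_a": [1.0, 5.0, 1.0, 5.0],  # best average, but volatile
    "config_b": [3.0, 3.5, 3.0, 3.5],  # slightly worse average, steady
}


def stability_score(scores, penalty=1.0):
    """Mean error plus a penalty on the spread across folds (assumed heuristic)."""
    return mean(scores) + penalty * pstdev(scores)


best_by_mean = min(results, key=lambda name: mean(results[name]))
best_by_stability = min(results, key=lambda name: stability_score(results[name]))

for name, scores in results.items():
    print(f"{name}: mean={mean(scores):.2f}  std={pstdev(scores):.2f}  "
          f"stability_score={stability_score(scores):.2f}")
print("best by mean:     ", best_by_mean)
print("best by stability:", best_by_stability)
```

The `penalty` weight controls how much average accuracy you are willing to give up for consistency. Setting it to zero recovers ordinary mean-based selection.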

This tuning philosophy matches the realities of production. Stable models are easier to monitor, update, and interpret. Cross-validation thus becomes a tool for risk management, not just optimization, and helps you select models that are more likely to stay reliable as data drifts over time.

Conclusion: Elevating Time Series Forecasting with Strategic Validation

Cross-validation is often undervalued in time series work, not because of any inherent limitation, but because it is misapplied. When temporal order is ignored or treated as just another feature, evaluation becomes misleading. When the temporal structure of the data is respected, cross-validation becomes a powerful instrument for understanding model behavior.

Walk-forward validation, window comparison, leakage detection, regime awareness, and stability-focused tuning all follow from one principle: test your model under conditions that mirror how it will actually be used. Applied consistently, that principle turns cross-validation from a perfunctory task into a significant competitive advantage.