Large language models (LLMs) optimized via human feedback now lead the development of intelligent conversational agents. Despite strong benchmark performance, LLM agents often struggle with multi-turn conversational skills, particularly disambiguation. Instead of asking clarifying questions when faced with ambiguity, they frequently overhedge or guess user intents. Acquiring high-quality conversation samples to improve LLMs' dialogue action learning remains a significant bottleneck.

In “Learning to Clarify: Multi-turn Conversations with Action-Based Contrastive Self-Training,” presented at ICLR 2025, we introduce Action-Based Contrastive Self-Training (ACT). ACT is a quasi-online preference optimization algorithm, built upon Direct Preference Optimization (DPO). It enables data-efficient dialogue policy learning for multi-turn conversation modeling. We prove ACT’s effectiveness in data-efficient tuning scenarios across multiple real-world conversational tasks, including tabular-grounded question-answering and machine reading comprehension. Furthermore, we introduce AmbigSQL, a new task designed to improve disambiguating information-seeking requests for complex Structured Query Language (SQL) code generation, facilitating the development of advanced data analysis agents. We also propose evaluating LLMs' conversational agent capabilities by assessing their ability to implicitly recognize and reason about ambiguity. ACT delivers substantial conversation modeling improvements compared to standard tuning methods like supervised fine-tuning and DPO.

A conversational agent capable of disambiguation asks clarifying questions to achieve more accurate final answers when ambiguity arises.

A Novel Perspective on Conversational Reasoning

Conventional neural approaches for building conversational agents typically involve two main components: a dialogue understanding and planning module (e.g., predicting when to ask a clarifying question) and a generation module for executing conversational actions. In the current interaction paradigm, LLMs are frequently adapted for end-to-end conversational applications, bypassing explicit intermediate planning stages. We propose optimizing conversational action planning directly as an implicit subtask of response generation, a paradigm we call implicit action planning.

Training an LLM for downstream tasks includes three phases: pre-training, supervised fine-tuning (SFT) for instruction adherence, and tuning for alignment with human preferences. A common algorithm for this final alignment phase is Direct Preference Optimization (DPO), an off-policy contrastive learning algorithm optimizing probabilities for winning and losing conversation responses. However, these algorithms often fail to adequately address the multi-turn nature of conversations. The proposed ACT algorithm directly tackles these limitations.

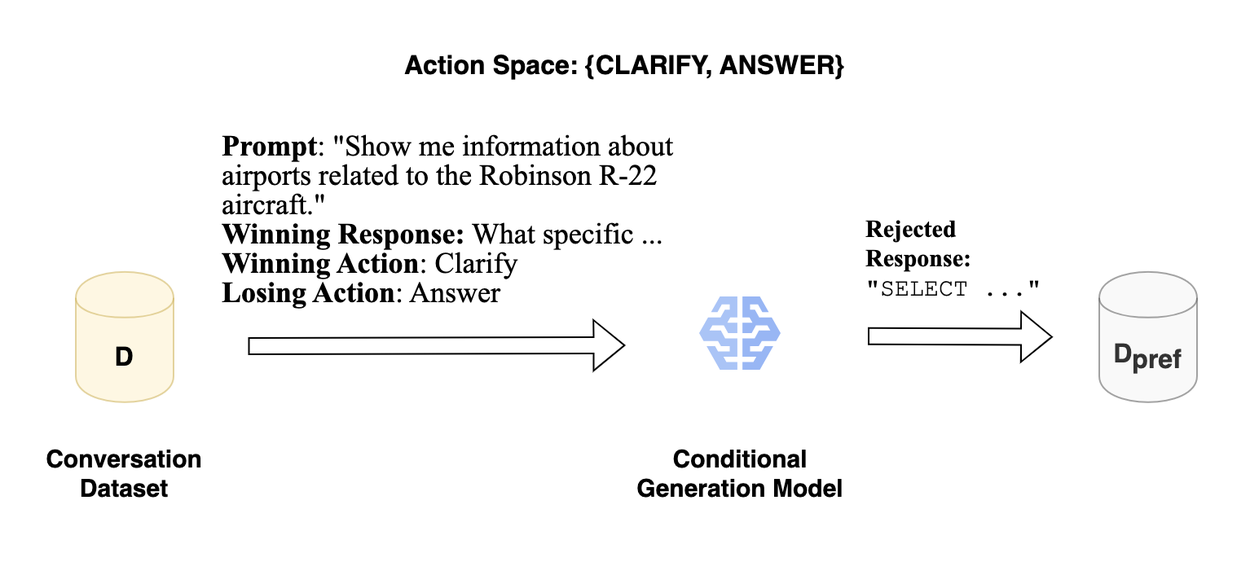

Phase 1: Action-Based Contrastive Data Generation

The initial phase of building ACT focuses on constructing a preference dataset comprising pairs of conversational responses, where one represents a winning action and the other a losing action. We begin with an existing conversational dataset. For each turn, we use the conversation history as part of the input prompt (e.g., “Show me information…”, as depicted in the figure) along with necessary task-specific context (like a SQL database schema). This turn serves as the winning response (e.g., “What specific…,” which expresses a “Clarify” action). We then synthesize a rejected response, representing a converse action (e.g., “Answer”), using a conditional generation model. This stage yields a pairwise dataset with synthetically generated rejected responses.

Overview of ACT's data generation phase.

Phase 2: Contrastive Self-Training

The second phase involves tuning the policy model using the DPO objective. Leveraging the prompts from Phase 1, we implement on-policy learning based on key intuitions:

- DPO-like algorithms optimize the log probabilities of winning and losing responses.

- On-policy response sampling inherently produces high-probability token sequences.

- Effective conversational improvement necessitates multi-turn optimization, which is challenging to capture with single-turn contrast pairings alone.

The accompanying figure illustrates ACT's operation guided by these intuitions. Instead of a direct offline gradient update using fixed contrastive pairs, we employ on-policy sampling. We first verify if the response correctly expresses the intended action (e.g., a clarifying question). If so, we simulate the trajectory's outcome, evaluating it against the original conversation's information-seeking intent. Based on the outcome's correctness, we replace either the winning or losing response from Phase 1's contrastive pair with the simulated multi-turn trajectory.

Overview of ACT's tuning phase. For each initial contrastive pair, we sample an on-policy response from the model. We evaluate the resulting trajectory and update the contrastive pair by replacing either the winning or losing response. The DPO objective then updates the policy.

Elevating State-of-the-Art Multi-Turn Modeling Capabilities

We evaluated ACT using open-weight LLMs on diverse multi-turn conversational datasets: PACIFIC (reasoning over tables and text), Abg-CoQA (reasoning over dense passages), and AmbigSQL (text-to-SQL generation). Our experiments compared ACT against competitive baselines, including supervised fine-tuning with cross-entropy loss (SFT), Iterative Reasoning Preference Optimization (IRPO), and prompting Gemini 1.5 and Claude 3.5 with in-context learning (ICL) examples.

Conversational Question Answering with Tabular Grounding on PACIFIC

The results across all three data-efficient settings for PACIFIC demonstrate ACT's superior performance compared to SFT and Gemini prompting, even when Gemini benefits from additional computation. Specifically, with only 50 conversations for tuning, ACT achieved up to a 19.1% relative improvement over SFT in implicitly recognizing ambiguity, increasing Macro F1 from 69.0 to 82.2. ACT also exhibits significantly improved data efficiency compared to adapter-based SFT with Gemini Pro, achieving a 35.7% relative improvement in multi-turn task performance (from 45.6 to 61.9 in DROP F1). Crucially, tuning with ACT in these limited data settings enables the model to match or surpass frontier LLMs used with in-context learning, despite ACT having zero in-context examples during inference. These findings underscore the importance of on-policy learning and multi-turn trajectory simulation for enhancing multi-turn goal completion.

ACT significantly surpasses standard tuning approaches in data-efficient conversational modeling scenarios.

Our paper provides further results on the PACIFIC corpus, demonstrating ACT's superiority over IRPO, alongside findings on Abg-CoQA and AmbigSQL.

Attributing Performance Gains from ACT

We conducted ablation studies to isolate the benefits of each ACT component:

Ablation study of ACT components on the PACIFIC dataset.

Are action-based preferences essential? A core feature of ACT is its contrastive pairs that highlight differences in conversational actions. Our “ACT w/ Random Actions” experiment, where we randomly sampled both winning and losing actions for preference pairs, shows underperformance compared to standard ACT. This confirms the necessity of action-specific preferences.

Is on-policy sampling critical? In the “ACT w/o on-policy sampling” experiment, we evaluated standard off-policy DPO on the Phase 1 dataset. While it improved over SFT (Macro F1 from 69.0 to 74.8), the gains were significantly smaller than with full ACT's on-policy sampling. This difference likely stems from off-policy negative responses potentially falling outside the policy model's language manifold, making distribution shift a substantial challenge.

Does trajectory simulation matter? ACT’s trajectory simulation aligns it better with multi-turn conversations. Without it, the approach resembles on-policy DPO variants like IRPO but uses a conversation-specific reward signal incorporating actions and task heuristics. Our “ACT w/ sampling w/o simulation” results highlight that trajectory-level simulation is vital for improving multi-turn performance, particularly the policy model's ability to reason about its own clarification questions.

Is ACT model-agnostic? Our main experiments used Zephyr (aligned Mistral 7B). The “ACT with unaligned foundation models” experiment showed a performance gap of 6.5 Action F1 and 4.3 Trajectory F1 after ACT tuning for unaligned models. Nevertheless, ACT demonstrably improves performance irrespective of pre-existing alignment with human feedback, though better initialization helps. Thus, ACT's performance enhancement is model-agnostic.

The Future of Multi-Turn Conversation Modeling

We introduce ACT, a model-agnostic, quasi-online contrastive tuning approach for sample-efficient conversational task adaptation, coupled with an evaluation framework for conversational agents. ACT shows significant effectiveness for task adaptation in low-data regimes. Future research could integrate ACT with advanced tuning methods for complex tasks like text-to-SQL generation, and explore its generalization capabilities in large-scale and multi-task environments.

Acknowledgements

We express our sincere gratitude for valuable feedback on our manuscript from Hanjun Dai, and for advice from Ta-Chung Chi and Kun Qian. We also acknowledge Chris Baron and Vipin Nair for their critical contributions. This work was conducted at Google Cloud AI Research, where Maximillian Chen served as a Student Researcher and Ruoxi Sun as a Research Scientist.